关键点检测项目代码开源了!

本文通过自建手势数据集,利用YOLOv5s检测,然后通过开源数据集训练squeezenet进行手部关键点预测,最后通过指间的夹角算法来判断具体的手势,并显示出来。文章第四部分为用C++实现整体的ncnn推理(代码较长,可先马后看)

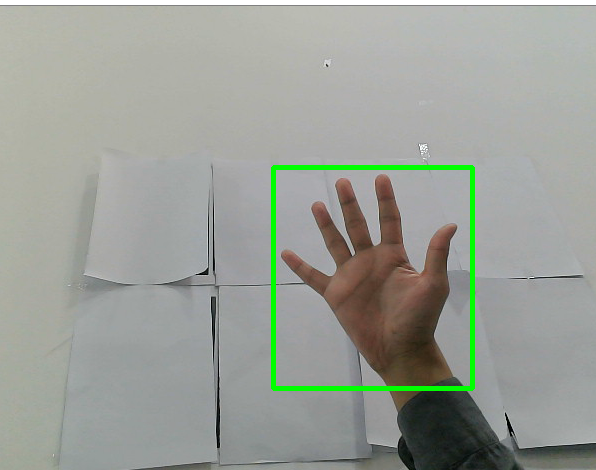

一、YOLOV5训练手部检测

训练及部署思路类似表情识别,需要将handpose数据集标签改成一类,只检测手部,简化流程,更易上手。

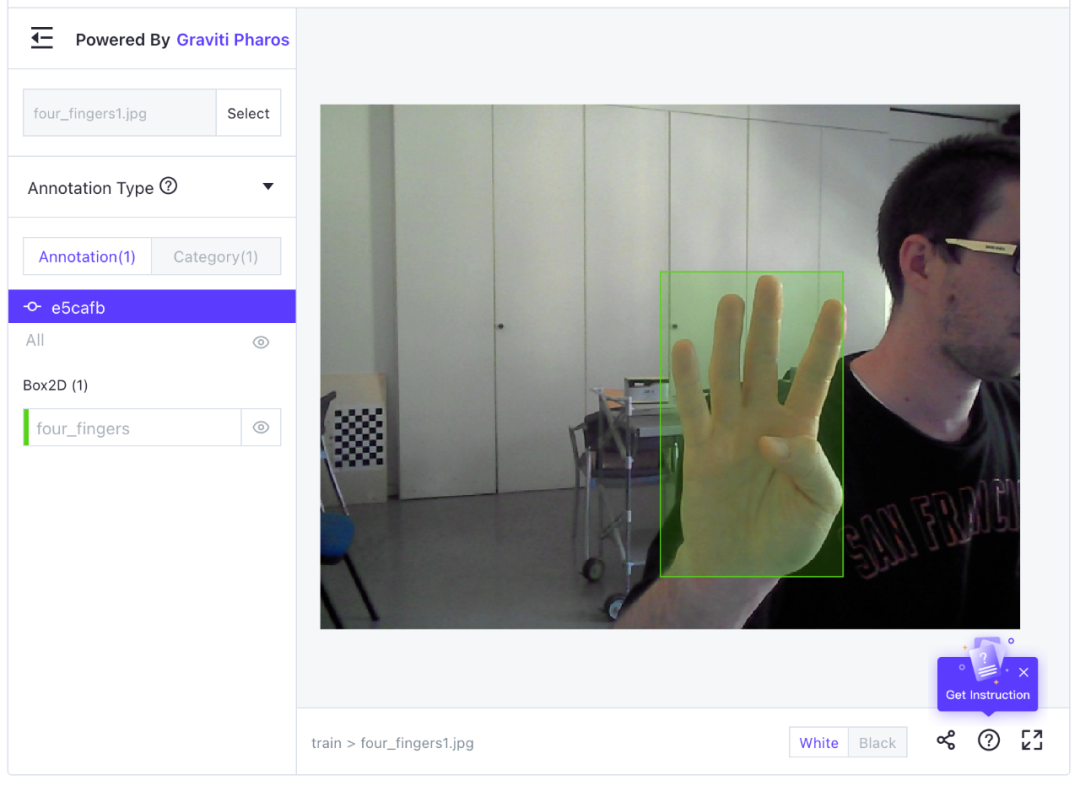

此部分数据集来源格物钛 https://gas.graviti.cn/dataset/datawhale/HandPose,具体的效果如图:

本教程所用训练环境: 系统环境:Ubuntu16.04 cuda版本:10.2 cudnn版本:7.6.5 pytorch版本:1.6.0 python版本:3.8 部署环境: 编译器:vs2015 依赖库:opencv ncnn 外设:普通USB摄像头

二、手部关节点检测

1、依赖环境

和YOLOV5训练手部检测一致。

2、检测数据集准备

该数据集包括网络图片以及数据集<Large-scale Multiview 3D Hand Pose Dataset> 筛选动作重复度低的图片,进行制作大概有5w张数据样本。其中<Large-scale Multiview 3D Hand Pose Dataset>数据集的官网地址:http://www.rovit.ua.es/dataset/mhpdataset/,其中标注文件示例如图2所示

制作好可以直接训练的数据集放在了开源数据平台格物钛:https://gas.graviti.com/dataset/datawhale/HandPoseKeyPoints

3、数据集在线使用

步骤1:安装格物钛平台SDK

pip install tensorbay

步骤2: 数据预处理

要使用已经处理好可以直接训练的数据集,步骤如下:

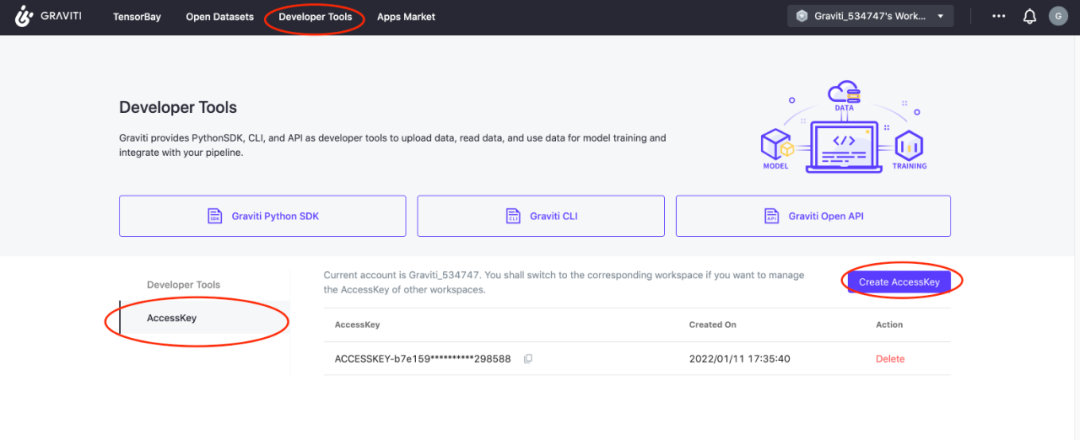

a. 打开本文对应数据集链接 https://gas.graviti.cn/dataset/datawhale/HandPose,在数据集页面,fork数据集到自己账户下;

b. 点击网页上方开发者工具 --> AccessKey --> 新建一个AccessKey --> 复制这个Key:KEY = 'Acces...........'

我们可以在不下载数据集的情况下,通过格物钛进行数据预处理,并将结果保存在本地。下面以使用HandPose数据集为例,使用HandPoseKeyPoints数据集操作同HandPose操作一样。

数据集开源地址:

https://gas.graviti.com/dataset/datawhale/HandPoseKeyPoints

完整项目代码:

https://github.com/datawhalechina/HandPoseKeyPoints

import numpy as np

from PIL import Image

from tensorbay import GAS

from tensorbay.dataset import Dataset

def read_gas_image(data):

with data.open() as fp:

image = Image.open(fp)

image.load()

return np.array(image)

# Authorize a GAS client.

gas = GAS('填入你的AccessKey')

# Get a dataset.

dataset = Dataset("HandPose", gas)dataset.enable_cache("data")

# List dataset segments.

segments = dataset.keys()

# Get a segment by name

segment = dataset["train"]

for data in segment:

# 图片数据

image = read_gas_image(data)

# 标签数据

# Use the data as you like.

for label_box2d in data.label.box2d:

xmin = label_box2d.xmin

ymin = label_box2d.ymin

xmax = label_box2d.xmax

ymax = label_box2d.ymax

box2d_category = label_box2d.category

break

数据集页面可视化效果:

#数据集划分

print(segments)

# ("train",'val')

print(len(dataset["train"]), "images in train dataset")

print(len(dataset["val"]), "images in valid dataset")

# 1306 images in train dataset

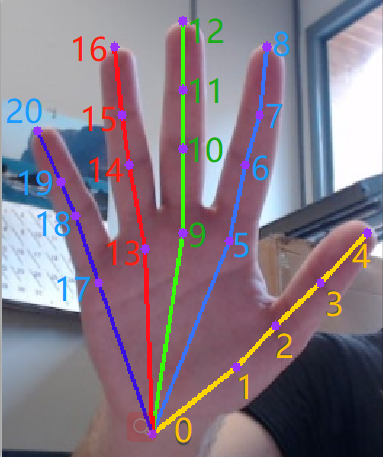

# 14 images in valid dataset4、关节点检测原理

关节点检测pipeline流程是:

1)输入图片对应手部的42个关节点坐标,

2)整个网络的backbone可以是任何分类网络,我这里采用的是squeezenet,然后损失函数是wingloss。

3)整个过程就是输入原图经过squeezenet网路计算出42个坐标值,然后通过wingloss进行回归计算更新权重,最后达到指定阈值,得出最终模型。

5、手部关节点训练

手部关节点算法采用开源代码参考地址:https://gitcode.net/EricLee/handpose_x

1)预训练模型

预训练模型在上述链接中有相应的网盘链接,可以直接下载。如果不想用预训练模型,可以直接从原始分类网络的原始权重开始训练。

2)模型的训练

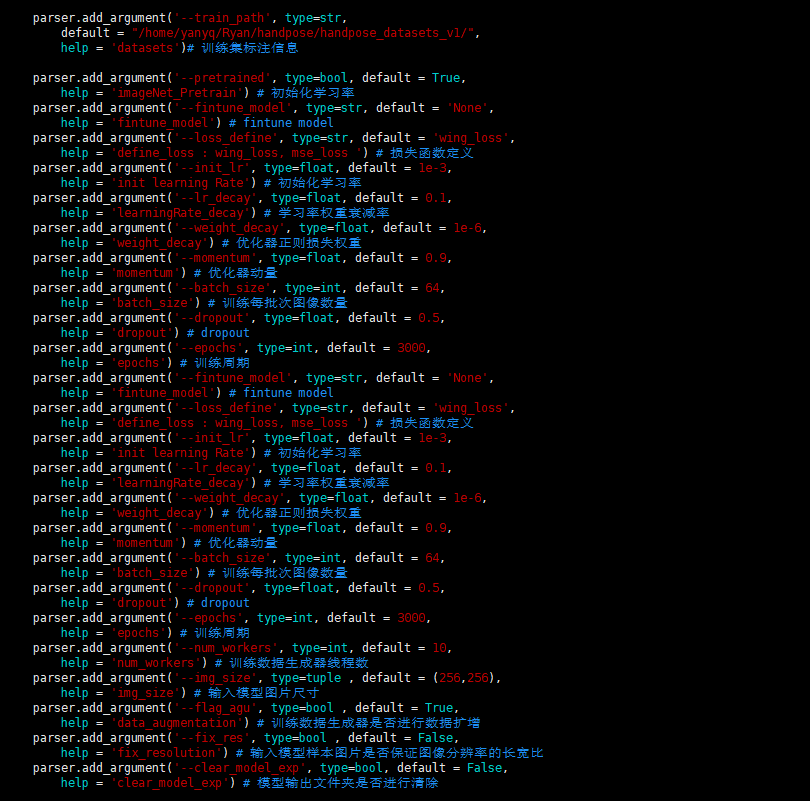

以下是训练网络指定参数解释,其意义直接看图中注释就可以了。

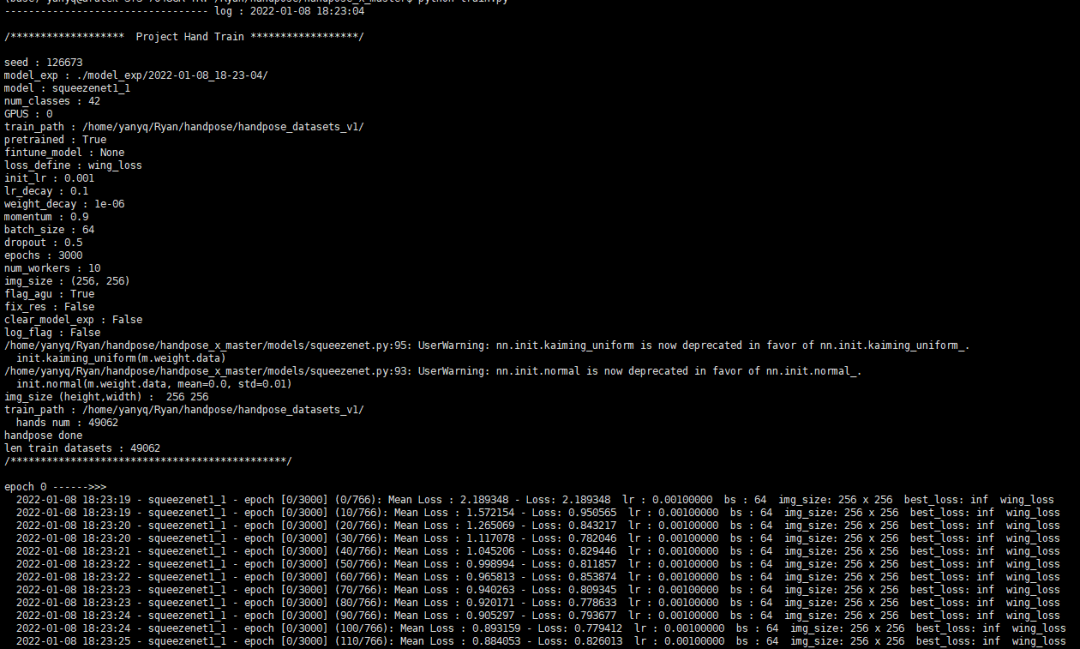

训练只需要运行训练命令,指定自己想要指定的参数就可以跑起来了,如下图:

6、手部关节点模型转换

1)安装依赖库

pip install onnx coremltools onnx-simplifier

2)导出onnx模型

python model2onnx.py --model_path squeezenet1_1-size-256-loss-wing_loss-model_epoch-2999.pth --model squeezenet1_1

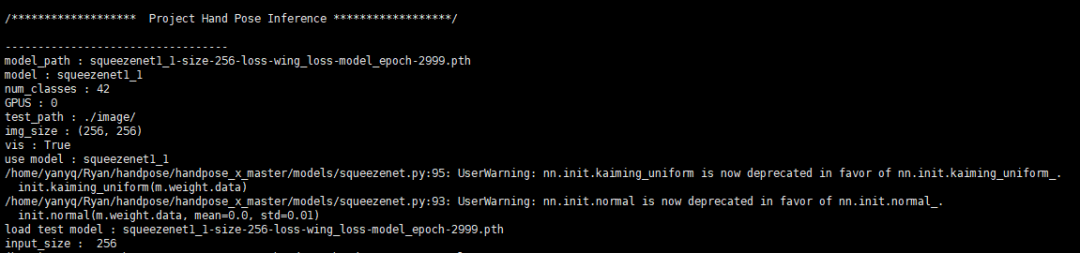

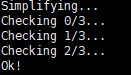

会出现如下图所示

其中model2onnx.py文件是在上述链接工程目录下的。此时当前文件夹下会出现一个相应的onnx模型export。

3)用onnx-simplifer简化模型

为什么要简化?

因为在训练完深度学习的pytorch或者tensorflow模型后,有时候需要把模型转成onnx,但是很多时候,很多节点比如cast节点,Identity这些节点可能都不需要,需要进行简化,这样会方便把模型转成ncnn mnn等端侧部署模型格式。

python -m onnxsim squeezenet1_1_size-256.onnx squeezenet1_1_sim.onnx

会出现下图:

上述过程完成后就生成了简化版本的模型squeezenet1_1_sim.onnx。

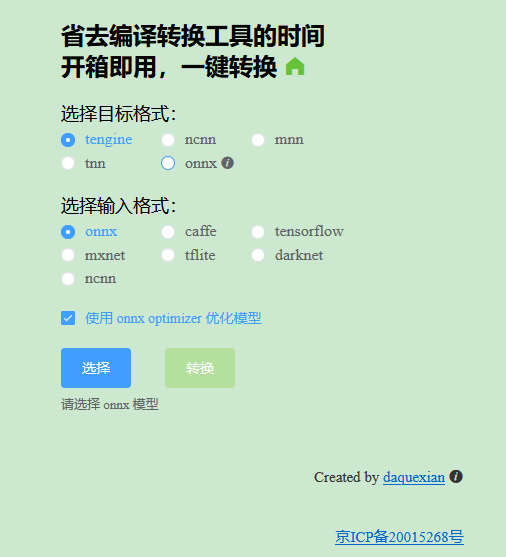

4)把检测模型转换成ncnn模型

可以直接利用网页在线版本转换模型,地址:https://convertmodel.com/ 页面如图:

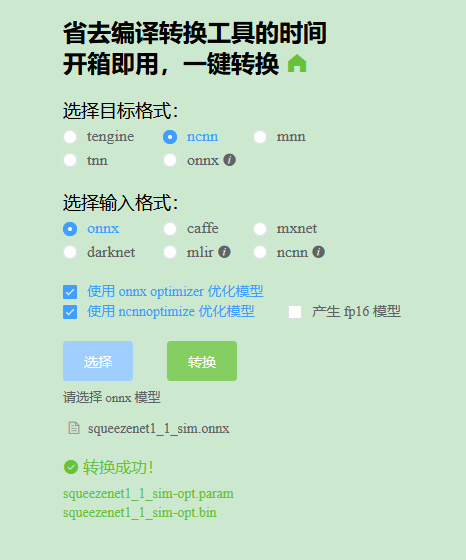

选择目标格式ncnn,选择输入格式onnx,点击选择,选择本地的简化版本的模型,然后选择转换,可以看到转换成功,下面两个就是转换成功的模型文件,如图。

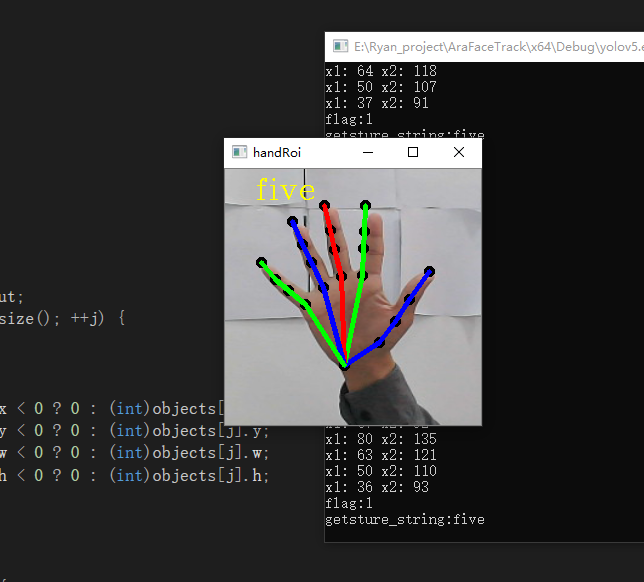

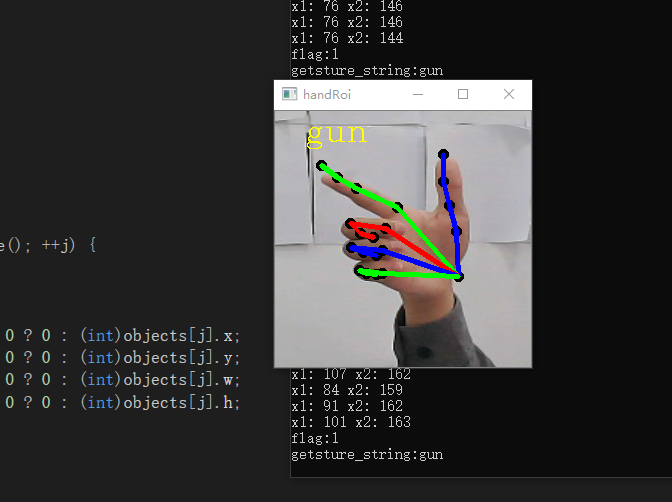

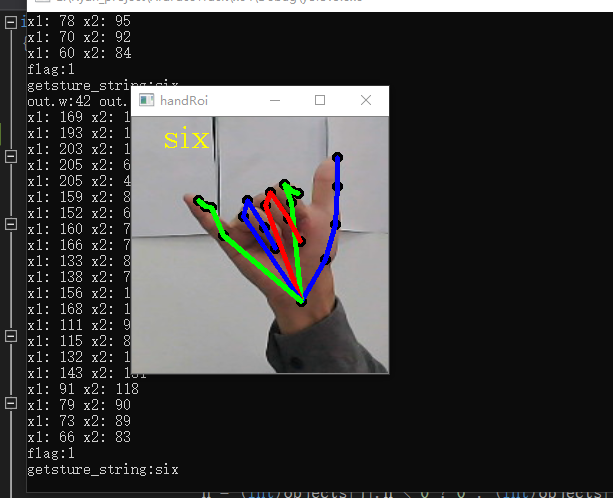

三、利用关节点手势识别算法

通过对检测到的手部关节点之间的角度计算,可以实现简单的手势识别。例如:计算大拇指向量0-2和3-4之间的角度,它们之间的角度大于某一个角度阈值(经验值)定义为弯曲,小于某一个阈值(经验值)为伸直。具体效果如下面三张图。

四、工程推理部署整体实现

此关节点手势识别的整体过程总结:首先是利用目标检测模型检测到手的位置,然后利用手部关节点检测模型,检测手部关节点具体位置,绘制关节点,以及关节点之间的连线。再利用简单的向量之间角度进行手势识别。

整体的ncnn推理C++ 代码实现:

#include <string>

#include <vector>

#include "iostream"

#include<cmath>

// ncnn

#include "ncnn/layer.h"

#include "ncnn/net.h"

#include "ncnn/benchmark.h"

#include "opencv2/core/core.hpp"

#include "opencv2/highgui/highgui.hpp"

#include <opencv2/imgproc.hpp>

#include "opencv2/opencv.hpp"

using namespace std;

using namespace cv;

static ncnn::UnlockedPoolAllocator g_blob_pool_allocator;

static ncnn::PoolAllocator g_workspace_pool_allocator;

static ncnn::Net yolov5;

static ncnn::Net hand_keyPoints;

class YoloV5Focus : public ncnn::Layer

{

public:

YoloV5Focus()

{

one_blob_only = true;

}

virtual int forward(const ncnn::Mat& bottom_blob, ncnn::Mat& top_blob, const ncnn::Option& opt) const

{

int w = bottom_blob.w;

int h = bottom_blob.h;

int channels = bottom_blob.c;

int outw = w / 2;

int outh = h / 2;

int outc = channels * 4;

top_blob.create(outw, outh, outc, 4u, 1, opt.blob_allocator);

if (top_blob.empty())

return -100;

#pragma omp parallel for num_threads(opt.num_threads)

for (int p = 0; p < outc; p++)

{

const float* ptr = bottom_blob.channel(p % channels).row((p / channels) % 2) + ((p / channels) / 2);

float* outptr = top_blob.channel(p);

for (int i = 0; i < outh; i++)

{

for (int j = 0; j < outw; j++)

{

*outptr = *ptr;

outptr += 1;

ptr += 2;

}

ptr += w;

}

}

return 0;

}

};

DEFINE_LAYER_CREATOR(YoloV5Focus)

struct Object

{

float x;

float y;

float w;

float h;

int label;

float prob;

};

static inline float intersection_area(const Object& a, const Object& b)

{

if (a.x > b.x + b.w || a.x + a.w < b.x || a.y > b.y + b.h || a.y + a.h < b.y)

{

// no intersection

return 0.f;

}

float inter_width = std::min(a.x + a.w, b.x + b.w) - std::max(a.x, b.x);

float inter_height = std::min(a.y + a.h, b.y + b.h) - std::max(a.y, b.y);

return inter_width * inter_height;

}

static void qsort_descent_inplace(std::vector<Object>& faceobjects, int left, int right)

{

int i = left;

int j = right;

float p = faceobjects[(left + right) / 2].prob;

while (i <= j)

{

while (faceobjects[i].prob > p)

i++;

while (faceobjects[j].prob < p)

j--;

if (i <= j)

{

std::swap(faceobjects[i], faceobjects[j]);

i++;

j--;

}

}

#pragma omp parallel sections

{

#pragma omp section

{

if (left < j) qsort_descent_inplace(faceobjects, left, j);

}

#pragma omp section

{

if (i < right) qsort_descent_inplace(faceobjects, i, right);

}

}

}

static void qsort_descent_inplace(std::vector<Object>& faceobjects)

{

if (faceobjects.empty())

return;

qsort_descent_inplace(faceobjects, 0, faceobjects.size() - 1);

}

static void nms_sorted_bboxes(const std::vector<Object>& faceobjects, std::vector<int>& picked, float nms_threshold)

{

picked.clear();

const int n = faceobjects.size();

std::vector<float> areas(n);

for (int i = 0; i < n; i++)

{

areas[i] = faceobjects[i].w * faceobjects[i].h;

}

for (int i = 0; i < n; i++)

{

const Object& a = faceobjects[i];

int keep = 1;

for (int j = 0; j < (int)picked.size(); j++)

{

const Object& b = faceobjects[picked[j]];

float inter_area = intersection_area(a, b);

float union_area = areas[i] + areas[picked[j]] - inter_area;

// float IoU = inter_area / union_area

if (inter_area / union_area > nms_threshold)

keep = 0;

}

if (keep)

picked.push_back(i);

}

}

static inline float sigmoid(float x)

{

return static_cast<float>(1.f / (1.f + exp(-x)));

}

static void generate_proposals(const ncnn::Mat& anchors, int stride, const ncnn::Mat& in_pad, const ncnn::Mat& feat_blob, float prob_threshold, std::vector<Object>& objects)

{

const int num_grid = feat_blob.h;

int num_grid_x;

int num_grid_y;

if (in_pad.w > in_pad.h)

{

num_grid_x = in_pad.w / stride;

num_grid_y = num_grid / num_grid_x;

}

else

{

num_grid_y = in_pad.h / stride;

num_grid_x = num_grid / num_grid_y;

}

const int num_class = feat_blob.w - 5;

const int num_anchors = anchors.w / 2;

for (int q = 0; q < num_anchors; q++)

{

const float anchor_w = anchors[q * 2];

const float anchor_h = anchors[q * 2 + 1];

const ncnn::Mat feat = feat_blob.channel(q);

for (int i = 0; i < num_grid_y; i++)

{

for (int j = 0; j < num_grid_x; j++)

{

const float* featptr = feat.row(i * num_grid_x + j);

// find class index with max class score

int class_index = 0;

float class_score = -FLT_MAX;

for (int k = 0; k < num_class; k++)

{

float score = featptr[5 + k];

if (score > class_score)

{

class_index = k;

class_score = score;

}

}

float box_score = featptr[4];

float confidence = sigmoid(box_score) * sigmoid(class_score);

if (confidence >= prob_threshold)

{

float dx = sigmoid(featptr[0]);

float dy = sigmoid(featptr[1]);

float dw = sigmoid(featptr[2]);

float dh = sigmoid(featptr[3]);

float pb_cx = (dx * 2.f - 0.5f + j) * stride;

float pb_cy = (dy * 2.f - 0.5f + i) * stride;

float pb_w = pow(dw * 2.f, 2) * anchor_w;

float pb_h = pow(dh * 2.f, 2) * anchor_h;

float x0 = pb_cx - pb_w * 0.5f;

float y0 = pb_cy - pb_h * 0.5f;

float x1 = pb_cx + pb_w * 0.5f;

float y1 = pb_cy + pb_h * 0.5f;

Object obj;

obj.x = x0;

obj.y = y0;

obj.w = x1 - x0;

obj.h = y1 - y0;

obj.label = class_index;

obj.prob = confidence;

objects.push_back(obj);

}

}

}

}

}

extern "C" {

void release()

{

fprintf(stderr, "YoloV5Ncnn finished!");

}

int init_handKeyPoint() {

ncnn::Option opt;

opt.lightmode = true;

opt.num_threads = 4;

opt.blob_allocator = &g_blob_pool_allocator;

opt.workspace_allocator = &g_workspace_pool_allocator;

opt.use_packing_layout = true;

fprintf(stderr, "handKeyPoint init!\n");

hand_keyPoints.opt = opt;

int ret_hand = hand_keyPoints.load_param("squeezenet1_1.param"); //squeezenet1_1 resnet_50

if (ret_hand != 0) {

std::cout << "ret_hand:" << ret_hand << std::endl;

}

ret_hand = hand_keyPoints.load_model("squeezenet1_1.bin"); //squeezenet1_1 resnet_50

if (ret_hand != 0) {

std::cout << "ret_hand:" << ret_hand << std::endl;

}

return 0;

}

int init()

{

fprintf(stderr, "YoloV5sNcnn init!\n");

ncnn::Option opt;

opt.lightmode = true;

opt.num_threads = 4;

opt.blob_allocator = &g_blob_pool_allocator;

opt.workspace_allocator = &g_workspace_pool_allocator;

opt.use_packing_layout = true;

yolov5.opt = opt;

yolov5.register_custom_layer("YoloV5Focus", YoloV5Focus_layer_creator);

// init param

{

int ret = yolov5.load_param("yolov5s.param");

if (ret != 0)

{

std::cout << "ret= " << ret << std::endl;

fprintf(stderr, "YoloV5Ncnn, load_param failed");

return -301;

}

}

// init bin

{

int ret = yolov5.load_model("yolov5s.bin");

if (ret != 0)

{

fprintf(stderr, "YoloV5Ncnn, load_model failed");

return -301;

}

}

return 0;

}

int detect(cv::Mat img, std::vector<Object> &objects)

{

double start_time = ncnn::get_current_time();

const int target_size = 320;

const int width = img.cols;

const int height = img.rows;

int w = img.cols;

int h = img.rows;

float scale = 1.f;

if (w > h)

{

scale = (float)target_size / w;

w = target_size;

h = h * scale;

}

else

{

scale = (float)target_size / h;

h = target_size;

w = w * scale;

}

cv::resize(img, img, cv::Size(w, h));

ncnn::Mat in = ncnn::Mat::from_pixels(img.data, ncnn::Mat::PIXEL_BGR2RGB, w, h);

int wpad = (w + 31) / 32 * 32 - w;

int hpad = (h + 31) / 32 * 32 - h;

ncnn::Mat in_pad;

ncnn::copy_make_border(in, in_pad, hpad / 2, hpad - hpad / 2, wpad / 2, wpad - wpad / 2, ncnn::BORDER_CONSTANT, 114.f);

{

const float prob_threshold = 0.4f;

const float nms_threshold = 0.51f;

const float norm_vals[3] = { 1 / 255.f, 1 / 255.f, 1 / 255.f };

in_pad.substract_mean_normalize(0, norm_vals);

ncnn::Extractor ex = yolov5.create_extractor();

ex.input("images", in_pad);

std::vector<Object> proposals;

{

ncnn::Mat out;

ex.extract("output", out);

ncnn::Mat anchors(6);

anchors[0] = 10.f;

anchors[1] = 13.f;

anchors[2] = 16.f;

anchors[3] = 30.f;

anchors[4] = 33.f;

anchors[5] = 23.f;

std::vector<Object> objects8;

generate_proposals(anchors, 8, in_pad, out, prob_threshold, objects8);

proposals.insert(proposals.end(), objects8.begin(), objects8.end());

}

{

ncnn::Mat out;

ex.extract("771", out);

ncnn::Mat anchors(6);

anchors[0] = 30.f;

anchors[1] = 61.f;

anchors[2] = 62.f;

anchors[3] = 45.f;

anchors[4] = 59.f;

anchors[5] = 119.f;

std::vector<Object> objects16;

generate_proposals(anchors, 16, in_pad, out, prob_threshold, objects16);

proposals.insert(proposals.end(), objects16.begin(), objects16.end());

}

{

ncnn::Mat out;

ex.extract("791", out);

ncnn::Mat anchors(6);

anchors[0] = 116.f;

anchors[1] = 90.f;

anchors[2] = 156.f;

anchors[3] = 198.f;

anchors[4] = 373.f;

anchors[5] = 326.f;

std::vector<Object> objects32;

generate_proposals(anchors, 32, in_pad, out, prob_threshold, objects32);

proposals.insert(proposals.end(), objects32.begin(), objects32.end());

}

// sort all proposals by score from highest to lowest

qsort_descent_inplace(proposals);

std::vector<int> picked;

nms_sorted_bboxes(proposals, picked, nms_threshold);

int count = picked.size();

objects.resize(count);

for (int i = 0; i < count; i++)

{

objects[i] = proposals[picked[i]];

float x0 = (objects[i].x - (wpad / 2)) / scale;

float y0 = (objects[i].y - (hpad / 2)) / scale;

float x1 = (objects[i].x + objects[i].w - (wpad / 2)) / scale;

float y1 = (objects[i].y + objects[i].h - (hpad / 2)) / scale;

// clip

x0 = std::max(std::min(x0, (float)(width - 1)), 0.f);

y0 = std::max(std::min(y0, (float)(height - 1)), 0.f);

x1 = std::max(std::min(x1, (float)(width - 1)), 0.f);

y1 = std::max(std::min(y1, (float)(height - 1)), 0.f);

objects[i].x = x0;

objects[i].y = y0;

objects[i].w = x1;

objects[i].h = y1;

}

}

return 0;

}

}

static const char* class_names[] = {"hand"};

void draw_face_box(cv::Mat& bgr, std::vector<Object> object)

{

for (int i = 0; i < object.size(); i++)

{

const auto obj = object[i];

cv::rectangle(bgr, cv::Point(obj.x, obj.y), cv::Point(obj.w, obj.h), cv::Scalar(0, 255, 0), 3, 8, 0);

std::cout << "label:" << class_names[obj.label] << std::endl;

string emoji_path = "emoji\\" + string(class_names[obj.label]) + ".png";

cv::Mat logo = cv::imread(emoji_path);

if (logo.empty()) {

std::cout << "imread logo failed!!!" << std::endl;

return;

}

resize(logo, logo, cv::Size(80, 80));

cv::Mat imageROI = bgr(cv::Range(obj.x, obj.x + logo.rows), cv::Range(obj.y, obj.y + logo.cols));

logo.copyTo(imageROI);

}

}

static int detect_resnet(const cv::Mat& bgr,std::vector<float>& output) {

ncnn::Mat in = ncnn::Mat::from_pixels_resize(bgr.data,ncnn::Mat::PIXEL_RGB,bgr.cols,bgr.rows,256,256);

const float mean_vals[3] = { 104.f,117.f,123.f };//

const float norm_vals[3] = { 1/255.f, 1/255.f, 1/255.f };//1/255.f

in.substract_mean_normalize(mean_vals, norm_vals); //0 mean_vals, norm_vals

ncnn::Extractor ex = hand_keyPoints.create_extractor();

ex.input("input", in);

ncnn::Mat out;

ex.extract("output",out);

std::cout << "out.w:" << out.w <<" out.h: "<< out.h <<std::endl;

output.resize(out.w);

for (int i = 0; i < out.w; i++) {

output[i] = out[i];

}

return 0;

}

float vector_2d_angle(cv::Point p1,cv::Point p2) {

//求解二维向量的角度

float angle = 0.0;

try {

float radian_value = acos((p1.x*p2.x+p1.y*p2.y)/(sqrt(p1.x*p1.x+p1.y*p1.y)*sqrt(p2.x*p2.x+p2.y*p2.y)));

angle = 180*radian_value/3.1415;

}catch(...){

angle = 65535.;

}

if (angle > 180.) {

angle = 65535.;

}

return angle;

}

std::vector<float> hand_angle(std::vector<int>& hand_x,std::vector<int>& hand_y) {

//获取对应手相关向量的二维角度,根据角度确定手势

float angle = 0.0;

std::vector<float> angle_list;

//------------------- thumb 大拇指角度

angle = vector_2d_angle(cv::Point((hand_x[0]-hand_x[2]),(hand_y[0]-hand_y[2])),cv::Point((hand_x[3]-hand_x[4]),(hand_y[3]-hand_y[4])));

angle_list.push_back(angle);

//--------------------index 食指角度

angle = vector_2d_angle(cv::Point((hand_x[0] - hand_x[6]), (hand_y[0] - hand_y[6])), cv::Point((hand_x[7] - hand_x[8]), (hand_y[7] - hand_y[8])));

angle_list.push_back(angle);

//---------------------middle 中指角度

angle = vector_2d_angle(cv::Point((hand_x[0] - hand_x[10]), (hand_y[0] - hand_y[10])), cv::Point((hand_x[11] - hand_x[12]), (hand_y[11] - hand_y[12])));

angle_list.push_back(angle);

//----------------------ring 无名指角度

angle = vector_2d_angle(cv::Point((hand_x[0] - hand_x[14]), (hand_y[0] - hand_y[14])), cv::Point((hand_x[15] - hand_x[16]), (hand_y[15] - hand_y[16])));

angle_list.push_back(angle);

//-----------------------pink 小拇指角度

angle = vector_2d_angle(cv::Point((hand_x[0] - hand_x[18]), (hand_y[0] - hand_y[18])), cv::Point((hand_x[19] - hand_x[20]), (hand_y[19] - hand_y[20])));

angle_list.push_back(angle);

return angle_list;

}

string h_gestrue(std::vector<float>& angle_lists) {

//二维约束的方式定义手势

//fist five gun love one six three thumbup yeah

float thr_angle = 65.;

float thr_angle_thumb = 53.;

float thr_angle_s = 49.;

string gesture_str;

bool flag = false;

for (int i = 0; i < angle_lists.size(); i++) {

if (abs(65535 - int(angle_lists[i])) > 0) {

flag = true; //进入手势判断标识

}

}

std::cout << "flag:" << flag << std::endl;

if (flag) {

if (angle_lists[0] > thr_angle_thumb && angle_lists[1] > thr_angle

&& angle_lists[2] > thr_angle && angle_lists[3] > thr_angle

&& angle_lists[4] > thr_angle) {

gesture_str = "fist";

}

else if (angle_lists[0] < thr_angle_s && angle_lists[1] < thr_angle_s

&& angle_lists[2] < thr_angle_s && angle_lists[3] < thr_angle_s

&& angle_lists[4] < thr_angle_s) {

gesture_str = "five";

}

else if(angle_lists[0] < thr_angle_s && angle_lists[1] < thr_angle_s

&& angle_lists[2] > thr_angle && angle_lists[3] > thr_angle

&& angle_lists[4] > thr_angle){

gesture_str = "gun";

}

else if (angle_lists[0] < thr_angle_s && angle_lists[1] < thr_angle_s

&& angle_lists[2] > thr_angle && angle_lists[3] > thr_angle

&& angle_lists[4] < thr_angle_s) {

gesture_str = "love";

}

else if (angle_lists[0] < 5 && angle_lists[1] < thr_angle_s

&& angle_lists[2] > thr_angle && angle_lists[3] > thr_angle

&& angle_lists[4] > thr_angle) {

gesture_str = "one";

}

else if (angle_lists[0] < thr_angle_s && angle_lists[1] > thr_angle

&& angle_lists[2] > thr_angle && angle_lists[3] > thr_angle

&& angle_lists[4] < thr_angle_s) {

gesture_str = "six";

}

else if (angle_lists[0] > thr_angle_thumb && angle_lists[1] < thr_angle_s

&& angle_lists[2] < thr_angle_s && angle_lists[3] < thr_angle_s

&& angle_lists[4] > thr_angle) {

gesture_str = "three";

}

else if (angle_lists[0] < thr_angle_s && angle_lists[1] > thr_angle

&& angle_lists[2] > thr_angle && angle_lists[3] > thr_angle

&& angle_lists[4] > thr_angle) {

gesture_str = "thumbUp";

}

else if (angle_lists[0] > thr_angle_thumb && angle_lists[1] < thr_angle_s

&& angle_lists[2] < thr_angle_s && angle_lists[3] > thr_angle

&& angle_lists[4] > thr_angle) {

gesture_str = "two";

}

}

return gesture_str;

}

int main()

{

Mat frame;

VideoCapture capture(0);

init();

init_handKeyPoint();

while (true)

{

capture >> frame;

if (!frame.empty()) {

std::vector<Object> objects;

detect(frame, objects);

std::vector<float> hand_output;

for (int j = 0; j < objects.size(); ++j) {

cv::Mat handRoi;

int x, y, w, h;

try {

x = (int)objects[j].x < 0 ? 0 : (int)objects[j].x;

y = (int)objects[j].y < 0 ? 0 : (int)objects[j].y;

w = (int)objects[j].w < 0 ? 0 : (int)objects[j].w;

h = (int)objects[j].h < 0 ? 0 : (int)objects[j].h;

if (w > frame.cols){

w = frame.cols;

}

if (h > frame.rows) {

h = frame.rows;

}

}

catch (cv::Exception e) {

}

//把手区域向外扩30个像素

x = max(0, x - 30);

y = max(0, y - 30);

int w_ = min(w - x + 30, 640);

int h_ = min(h - y + 30, 480);

cv::Rect roi(x,y,w_,h_);

handRoi = frame(roi);

cv::resize(handRoi,handRoi,cv::Size(256,256));

//detect_resnet(handRoi, hand_output);

detect_resnet(handRoi, hand_output);

std::vector<float> angle_lists;

string gesture_string;

std::vector<int> hand_points_x; //

std::vector<int> hand_points_y;

for (int k = 0; k < hand_output.size()/2; k++) {

int x = int(hand_output[k * 2 + 0] * handRoi.cols);//+int(roi.x)-1;

int y = int(hand_output[k * 2 + 1] * handRoi.rows);// +int(roi.y) - 1;

//x1 = x1 < 0 ? abs(x1) : x1;

//x2 = x2 < 0 ? abs(x2) : x2;

hand_points_x.push_back(x);

hand_points_y.push_back(y);

std::cout << "x1: " << x << " x2: " << y << std::endl;

cv::circle(handRoi, cv::Point(x,y), 3, (0, 255, 0), 3);

cv::circle(handRoi, cv::Point(x,y), 3, (0, 255, 0), 3);

}

cv::line(handRoi, cv::Point(hand_points_x[0], hand_points_y[0]), cv::Point(hand_points_x[1], hand_points_y[1]), cv::Scalar(255, 0, 0), 3);

cv::line(handRoi, cv::Point(hand_points_x[1], hand_points_y[1]), cv::Point(hand_points_x[2], hand_points_y[2]), cv::Scalar(255, 0, 0), 3);

cv::line(handRoi, cv::Point(hand_points_x[2], hand_points_y[2]), cv::Point(hand_points_x[3], hand_points_y[3]), cv::Scalar(255, 0, 0), 3);

cv::line(handRoi, cv::Point(hand_points_x[3], hand_points_y[3]), cv::Point(hand_points_x[4], hand_points_y[4]), cv::Scalar(255, 0, 0), 3);

cv::line(handRoi, cv::Point(hand_points_x[0], hand_points_y[0]), cv::Point(hand_points_x[5], hand_points_y[5]), cv::Scalar(0, 255, 0), 3);

cv::line(handRoi, cv::Point(hand_points_x[5], hand_points_y[5]), cv::Point(hand_points_x[6], hand_points_y[6]), cv::Scalar(0, 255, 0), 3);

cv::line(handRoi, cv::Point(hand_points_x[6], hand_points_y[6]), cv::Point(hand_points_x[7], hand_points_y[7]), cv::Scalar(0, 255, 0), 3);

cv::line(handRoi, cv::Point(hand_points_x[7], hand_points_y[7]), cv::Point(hand_points_x[8], hand_points_y[8]), cv::Scalar(0, 255, 0), 3);

cv::line(handRoi, cv::Point(hand_points_x[0], hand_points_y[0]), cv::Point(hand_points_x[9], hand_points_y[9]), cv::Scalar(0, 0, 255), 3);

cv::line(handRoi, cv::Point(hand_points_x[9], hand_points_y[9]), cv::Point(hand_points_x[10], hand_points_y[10]), cv::Scalar(0, 0, 255), 3);

cv::line(handRoi, cv::Point(hand_points_x[10], hand_points_y[10]), cv::Point(hand_points_x[11], hand_points_y[11]), cv::Scalar(0, 0, 255), 3);

cv::line(handRoi, cv::Point(hand_points_x[11], hand_points_y[11]), cv::Point(hand_points_x[12], hand_points_y[12]), cv::Scalar(0, 0, 255), 3);

cv::line(handRoi, cv::Point(hand_points_x[0], hand_points_y[0]), cv::Point(hand_points_x[13], hand_points_y[13]), cv::Scalar(255, 0, 0), 3);

cv::line(handRoi, cv::Point(hand_points_x[13], hand_points_y[13]), cv::Point(hand_points_x[14], hand_points_y[14]), cv::Scalar(255, 0, 0), 3);

cv::line(handRoi, cv::Point(hand_points_x[14], hand_points_y[14]), cv::Point(hand_points_x[15], hand_points_y[15]), cv::Scalar(255, 0, 0), 3);

cv::line(handRoi, cv::Point(hand_points_x[15], hand_points_y[15]), cv::Point(hand_points_x[16], hand_points_y[16]), cv::Scalar(255, 0, 0), 3);

cv::line(handRoi, cv::Point(hand_points_x[0], hand_points_y[0]), cv::Point(hand_points_x[17], hand_points_y[17]), cv::Scalar(0, 255, 0), 3);

cv::line(handRoi, cv::Point(hand_points_x[17], hand_points_y[17]), cv::Point(hand_points_x[18], hand_points_y[18]), cv::Scalar(0, 255, 0), 3);

cv::line(handRoi, cv::Point(hand_points_x[18], hand_points_y[18]), cv::Point(hand_points_x[19], hand_points_y[19]), cv::Scalar(0, 255, 0), 3);

cv::line(handRoi, cv::Point(hand_points_x[19], hand_points_y[19]), cv::Point(hand_points_x[20], hand_points_y[20]), cv::Scalar(0, 255, 0), 3);

angle_lists = hand_angle(hand_points_x, hand_points_y);

gesture_string = h_gestrue(angle_lists);

std::cout << "getsture_string:" << gesture_string << std::endl;

cv::putText(handRoi,gesture_string,cv::Point(30,30),cv::FONT_HERSHEY_COMPLEX,1, cv::Scalar(0, 255, 255), 1, 1, 0);

cv::imshow("handRoi", handRoi);

cv::waitKey(10);

angle_lists.clear();

hand_points_x.clear();

hand_points_y.clear();

}

}

if (cv::waitKey(20) == 'q')

break;

}

capture.release();

return 0;

}